
Training a CNN Model for Gomoku

Train a CNN model to evaluate the current Gomoku board state and predict the next move, then evaluate and optimize the model.

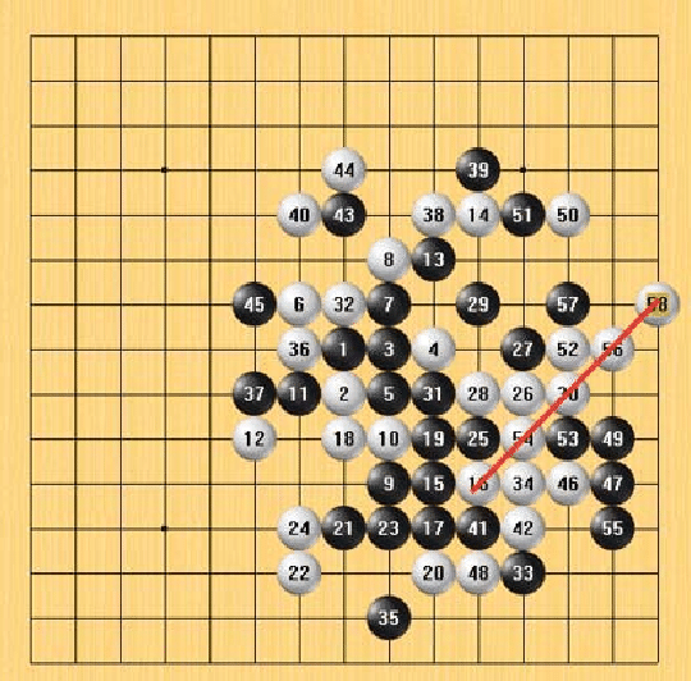

Background

Gomoku (Five-in-a-Row, 五子棋) is a strategy board game where two players take turns placing black and white pieces on a board, aiming to be the first to get five in a row horizontally, vertically, or diagonally.

Gomoku strategy involves both offensive and defensive tactical patterns, such as creating open rows of three or four stones (known as "open three" or "open four") while simultaneously blocking the opponent’s potential winning lines.

With the rise of artificial intelligence, machine learning models are increasingly being used to predict the next optimal move, analyze board states, and even challenge human players.

Data Source

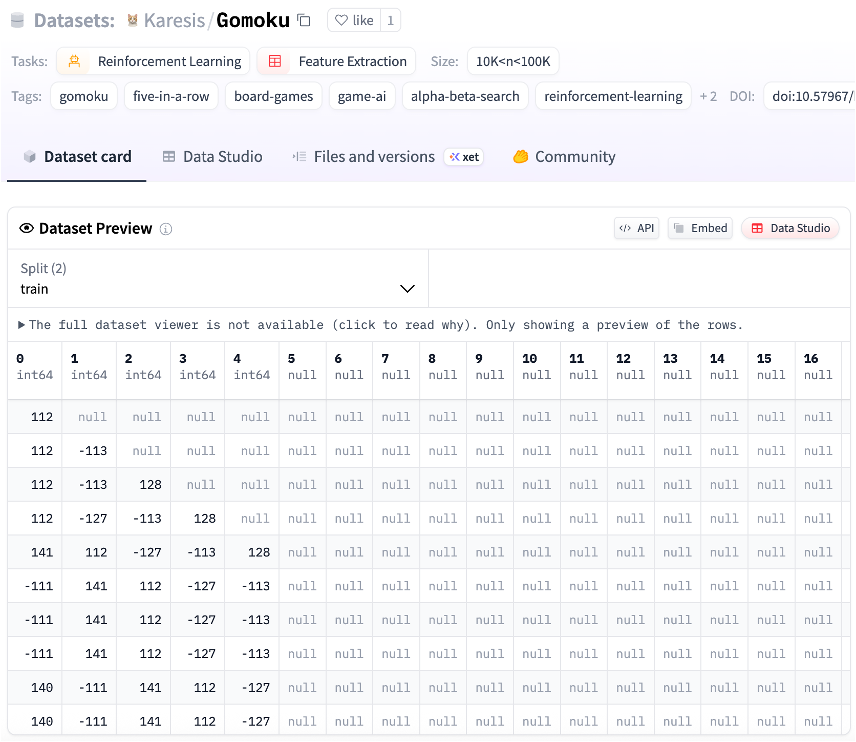

Open-source game records: https://huggingface.co/datasets/Karesis/Gomoku

Dataset description:

The dataset contains board states and moves from 875 self-play Gomoku games, totaling 26,378 training examples.

The data was generated using WinePy, a Python implementation of the Wine Gomoku AI engine.

Each example consists of a board state and the corresponding optimal next move as determined by an alpha-beta search algorithm with pattern recognition.

Development Steps

Documentation and code: https://github.com/luguanxing/Gomoku-AI-Master

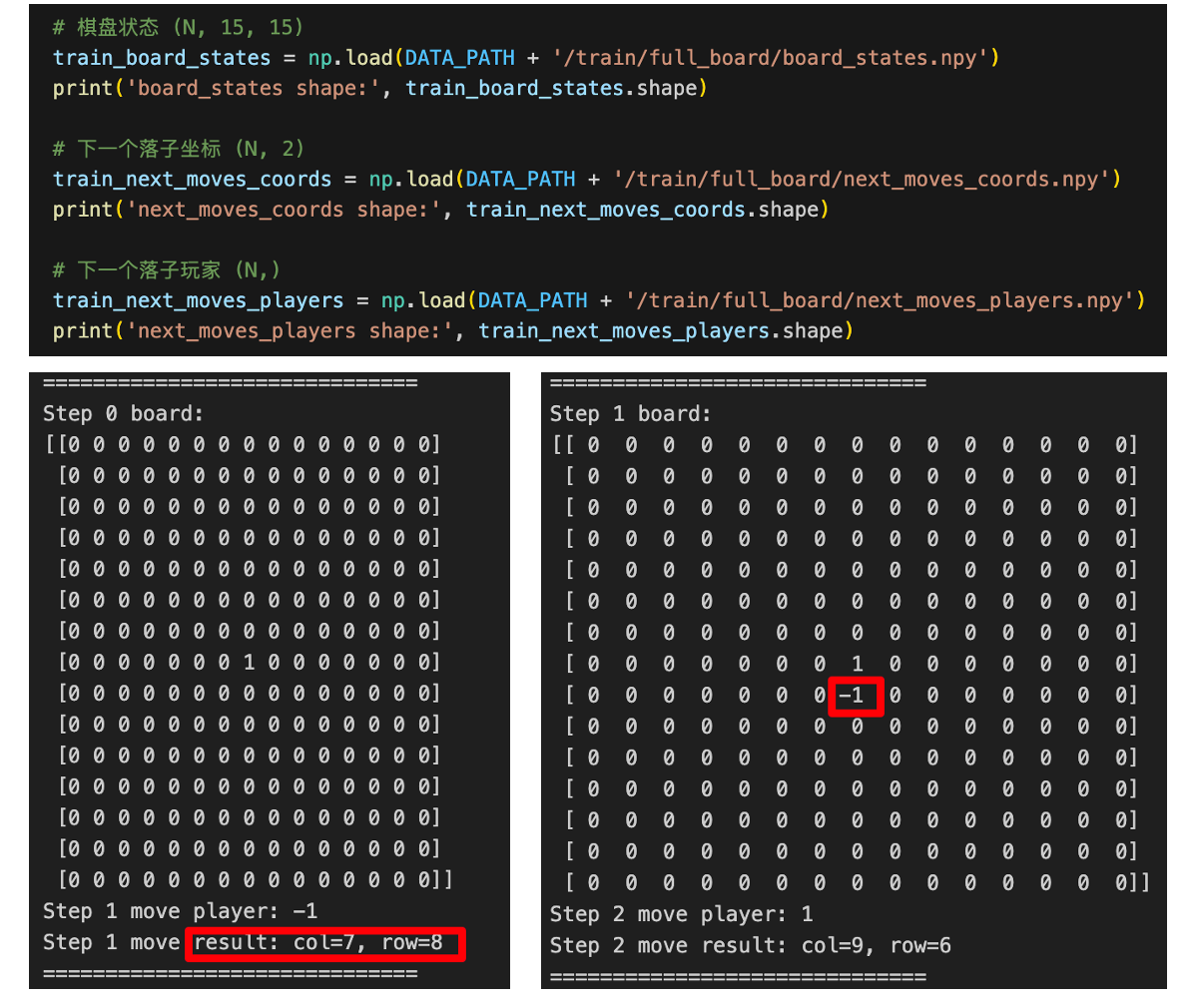

1. Load Data

The first step is to load the data.

Each row contains the current board, the player to move next, and the next move.
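To make the row layout concrete, here is a minimal sketch that turns one raw record into a typed sample. The field names (`board`, `player`, `move`), the cell convention (0 empty, 1 black, -1 white) and the flat move index are assumptions for illustration, not the dataset's documented schema, so check the dataset card before relying on them.

```python
from typing import List, NamedTuple, Tuple

BOARD_SIZE = 15  # standard Gomoku board used throughout this post


class GomokuSample(NamedTuple):
    board: List[List[int]]  # 15x15 grid; hypothetical convention: 0 empty, 1 black, -1 white
    player: int             # player to move next (hypothetical: 1 or -1)
    move: Tuple[int, int]   # (row, col) of the recorded next move


def parse_record(record: dict) -> GomokuSample:
    """Convert one raw record into a GomokuSample.

    Assumes hypothetical keys: 'board' is a flat list of 225 ints,
    'player' is 1 or -1, and 'move' is a flat index in [0, 225).
    """
    flat = record["board"]
    if len(flat) != BOARD_SIZE * BOARD_SIZE:
        raise ValueError(f"expected {BOARD_SIZE * BOARD_SIZE} cells, got {len(flat)}")
    # Reshape the flat list row by row into a 15x15 grid.
    board = [list(flat[r * BOARD_SIZE:(r + 1) * BOARD_SIZE]) for r in range(BOARD_SIZE)]
    row, col = divmod(record["move"], BOARD_SIZE)
    return GomokuSample(board=board, player=record["player"], move=(row, col))


raw = {"board": [0] * 225, "player": 1, "move": 112}
print(parse_record(raw).move)  # (7, 7), the centre of the board
```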

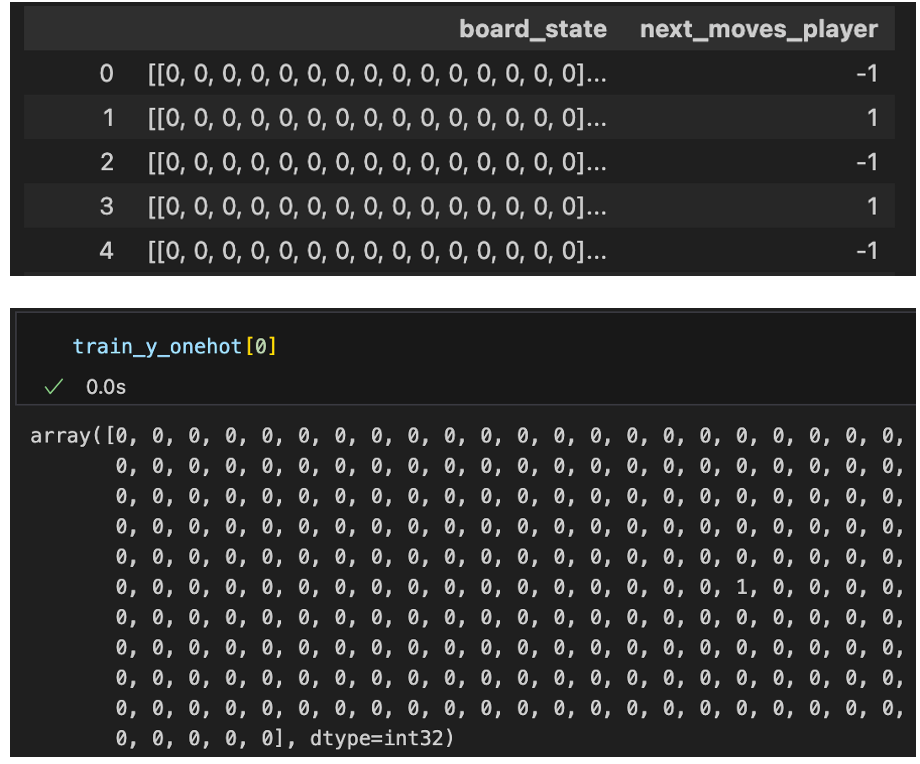

2. Process Data

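The second step is to process the data.

We encode each example as follows: the input is one value for the player to move plus 15×15 values for the board state (226 values in total), and the output is a one-hot 15×15 grid (225 classes) marking the location of the next move.

This step is also where we clean the data, removing examples whose recorded move is illegal (for example, a move onto an already occupied cell).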
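The encoding and cleansing can be sketched in plain Python. The cell convention (0 empty, 1 black, -1 white) and the helper names are hypothetical; the layout follows the text: one value for the side to move followed by the 225 board cells as input, and a one-hot vector over the 225 cells as target. Examples whose move is off the board or onto an occupied cell are dropped.

```python
from typing import List, Optional, Tuple

BOARD_SIZE = 15
NUM_CELLS = BOARD_SIZE * BOARD_SIZE  # 225 possible moves


def is_legal(board: List[List[int]], move: Tuple[int, int]) -> bool:
    """A Gomoku move is legal if it is on the board and the cell is empty."""
    row, col = move
    return 0 <= row < BOARD_SIZE and 0 <= col < BOARD_SIZE and board[row][col] == 0


def encode(board, player, move) -> Optional[Tuple[List[float], List[float]]]:
    """Return (input_vector, one_hot_target), or None if the move is illegal.

    input_vector:   [player] + 225 board cells -> length 226
    one_hot_target: 225 zeros with a single 1 at the move's flat index
    """
    if not is_legal(board, move):
        return None  # data cleansing: drop illegal examples
    features = [float(player)] + [float(cell) for row in board for cell in row]
    target = [0.0] * NUM_CELLS
    target[move[0] * BOARD_SIZE + move[1]] = 1.0
    return features, target


def clean_and_encode(samples):
    """Encode every (board, player, move) tuple, skipping illegal ones."""
    encoded = (encode(b, p, m) for b, p, m in samples)
    return [e for e in encoded if e is not None]


empty = [[0] * BOARD_SIZE for _ in range(BOARD_SIZE)]
occupied = [row[:] for row in empty]
occupied[7][7] = 1
data = clean_and_encode([(empty, 1, (7, 7)), (occupied, -1, (7, 7))])
print(len(data))  # 1: the second example plays onto an occupied cell
```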

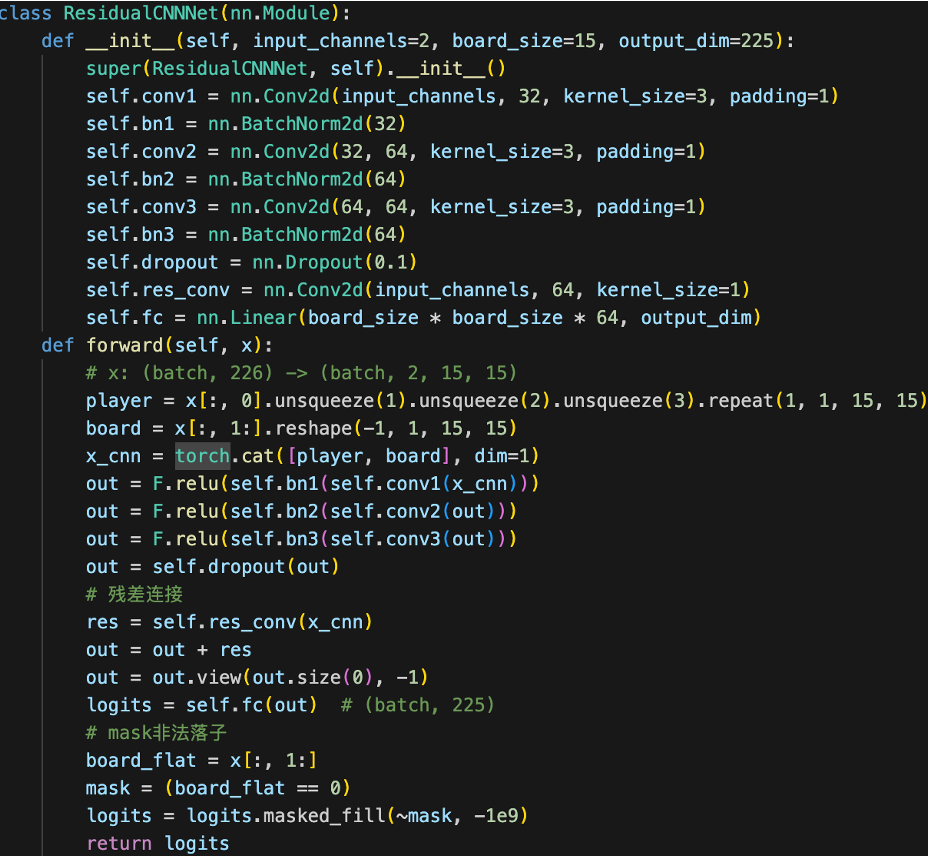

3. Define Neural Network

The third step is to build the network.

Here we can try different architectures and improve them with techniques such as dropout, residual connections, and masking.

Each network returns logits, one per board position (225 in total), which we use for the prediction.
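One common form of masking is to exclude occupied cells from the prediction before the softmax, so the model can never pick an illegal move. The sketch below applies that idea to a flat list of 225 logits in plain Python; in a real network this would typically be done on tensors, and the function names are hypothetical.

```python
import math
from typing import List

BOARD_SIZE = 15


def masked_softmax(logits: List[float], board: List[List[int]]) -> List[float]:
    """Softmax over the 225 logits with occupied cells forced to probability 0.

    Assumes at least one empty cell; otherwise every entry would be -inf.
    """
    flat_board = [cell for row in board for cell in row]
    # Occupied cells get -inf so exp() maps them to exactly 0.
    masked = [l if cell == 0 else -math.inf for l, cell in zip(logits, flat_board)]
    m = max(masked)  # subtract the max for numerical stability
    exps = [math.exp(l - m) for l in masked]
    total = sum(exps)
    return [e / total for e in exps]


def predict_move(logits, board):
    """Return the (row, col) with the highest masked probability."""
    probs = masked_softmax(logits, board)
    best = max(range(len(probs)), key=probs.__getitem__)
    return divmod(best, BOARD_SIZE)


board = [[0] * BOARD_SIZE for _ in range(BOARD_SIZE)]
board[0][0] = 1
logits = [0.0] * (BOARD_SIZE * BOARD_SIZE)
logits[0] = 10.0  # the raw model "prefers" the occupied cell (0, 0)
print(predict_move(logits, board))  # (0, 1): the occupied cell is masked out
```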

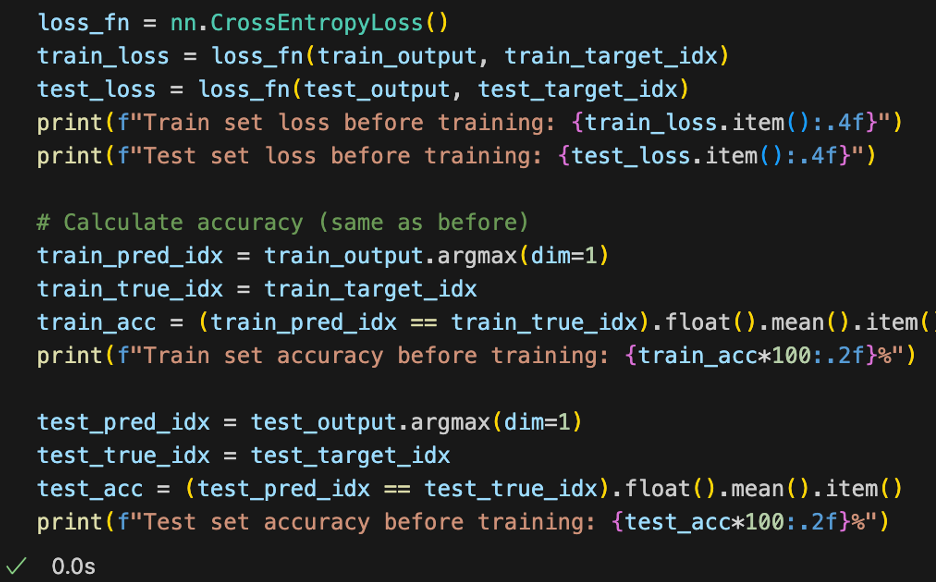

4. Validation Before Training

Before training, we run the untrained models on the data and record their loss and accuracy, so that after training we can see whether, and by how much, they have improved.

For example, before training the CNN has a cross-entropy loss of about 5 and an accuracy (correctly predicted positions / all cases) of 0.003. This is roughly chance level for 225 possible moves: a uniform guess gives a loss of ln 225 ≈ 5.42 and an expected accuracy of 1/225 ≈ 0.004.
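Both baseline metrics take only a few lines of plain Python. The sketch below (function names are hypothetical) works on predicted probability vectors and the index of the true move, and checks the uniform-guess loss numerically.

```python
import math
from typing import Sequence


def cross_entropy(probs: Sequence[Sequence[float]], targets: Sequence[int]) -> float:
    """Mean negative log-probability assigned to the true move index."""
    eps = 1e-12  # guard against log(0)
    return sum(-math.log(max(p[t], eps)) for p, t in zip(probs, targets)) / len(targets)


def accuracy(probs, targets) -> float:
    """Fraction of examples whose highest-probability cell is the true move."""
    hits = sum(max(range(len(p)), key=p.__getitem__) == t for p, t in zip(probs, targets))
    return hits / len(targets)


NUM_CELLS = 225
uniform = [[1.0 / NUM_CELLS] * NUM_CELLS] * 3
targets = [0, 112, 224]
print(round(cross_entropy(uniform, targets), 2))  # 5.42, i.e. ln(225)
print(round(1 / NUM_CELLS, 4))                    # 0.0044, expected accuracy of a random guess
```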

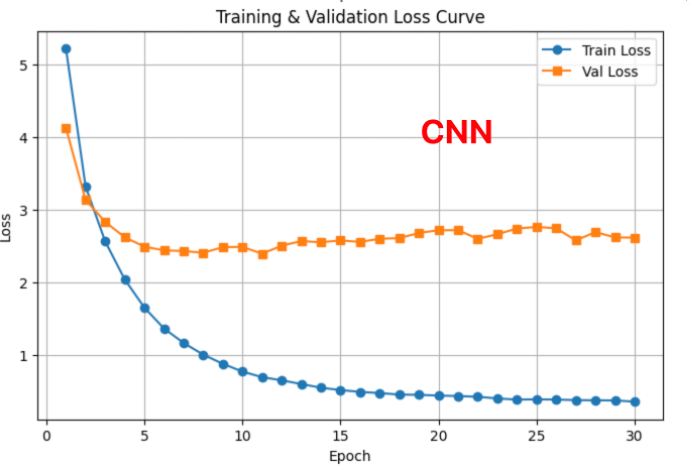

5. Training

Then, we can start training the model.

We can plot loss and accuracy curves during training.

Different models produce different curves. For example, as training continues, the CNN's loss does not increase, but its accuracy plateaus.
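Spotting a plateau on the curves can also be automated. The following is a hypothetical helper, not part of the original project: it records per-epoch metrics and reports a plateau when the best accuracy of the last `patience` epochs does not beat the earlier best by more than `min_delta`. The default thresholds and the numbers in the usage lines are made-up values for illustration.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class TrainingHistory:
    """Per-epoch metrics, kept so they can be plotted or inspected later."""
    loss: List[float] = field(default_factory=list)
    accuracy: List[float] = field(default_factory=list)

    def log(self, loss: float, accuracy: float) -> None:
        self.loss.append(loss)
        self.accuracy.append(accuracy)

    def accuracy_plateaued(self, patience: int = 3, min_delta: float = 0.005) -> bool:
        """True if the last `patience` epochs did not improve on the earlier best
        accuracy by more than `min_delta` (illustrative defaults)."""
        if len(self.accuracy) <= patience:
            return False  # not enough epochs to judge yet
        best_before = max(self.accuracy[:-patience])
        best_recent = max(self.accuracy[-patience:])
        return best_recent - best_before <= min_delta

    def loss_increased(self) -> bool:
        """True if the latest loss is higher than the lowest loss seen before it."""
        return len(self.loss) > 1 and self.loss[-1] > min(self.loss[:-1])


history = TrainingHistory()
# Made-up (loss, accuracy) pairs, not results from the project.
for loss, acc in [(4.1, 0.30), (3.4, 0.44), (3.1, 0.49), (3.0, 0.492), (2.95, 0.491), (2.93, 0.493)]:
    history.log(loss, acc)
print(history.accuracy_plateaued())  # True
print(history.loss_increased())      # False
```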

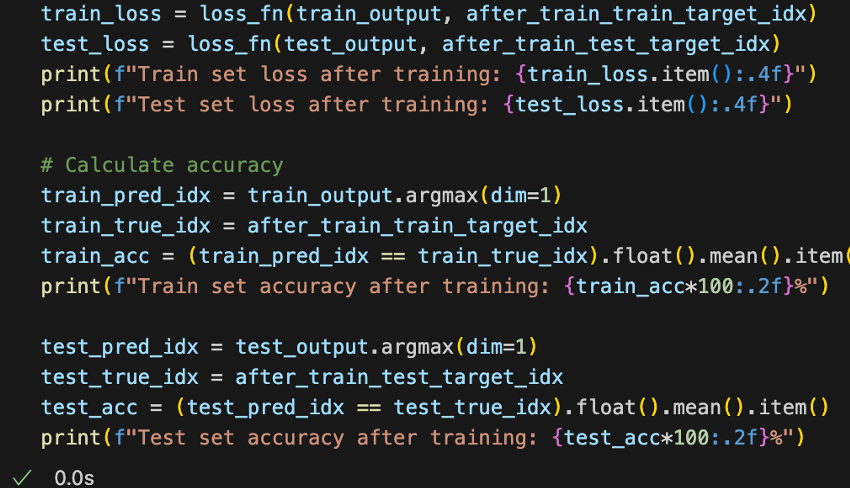

6. Validation After Training

Once training is finished, we compare the results before and after.

On the test set, the loss was about 5 before training; after training it is 13 for the NN and 2.9 for the CNN.

Accuracy went from about 0 before training to around 50% for both the NN and the CNN, a large improvement.
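The NN's pairing of roughly 50% accuracy with a loss of 13 is worth unpacking. Write the mean loss as a mix of correctly and wrongly predicted examples, with accuracy a. When the top prediction is correct, that cell holds the largest of 225 probabilities summing to 1, so its probability is at least 1/225 and its loss at most ln 225. Taking the rounded figures a = 0.5 and a mean loss of 13:

```latex
\bar{\ell} = a\,\bar{\ell}_{\text{right}} + (1-a)\,\bar{\ell}_{\text{wrong}},
\qquad 0 \le \bar{\ell}_{\text{right}} \le \ln 225 \approx 5.42

\bar{\ell}_{\text{wrong}} = \frac{\bar{\ell} - a\,\bar{\ell}_{\text{right}}}{1-a}
  = \frac{13 - 0.5\,\bar{\ell}_{\text{right}}}{0.5}
  \in [\,26 - 5.42,\ 26\,] = [\,20.58,\ 26\,]
```

So on the positions the NN gets wrong, the geometric mean of the probability it gives the true move is at most e^{-20.58}, about 10^{-9}: it is confidently wrong, which cross-entropy punishes heavily even though accuracy does not. The CNN reaches similar accuracy with a loss of 2.9, so it avoids such extreme mistakes.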

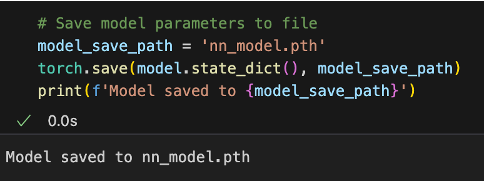

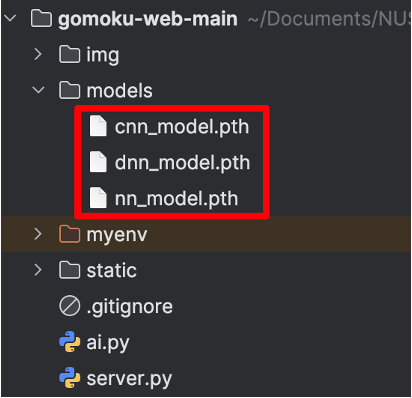

7. Save Model

After training, we save the models so that a web server can load them for deployment.
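With PyTorch, the usual approach is `torch.save(model.state_dict(), path)` during training and `model.load_state_dict(torch.load(path))` on the server. The dependency-free sketch below shows the same idea with a hypothetical weights dictionary stored as JSON next to the metadata the server should check before using it (here, the architecture name and board size); the file layout and field names are assumptions.

```python
import json
import os
import tempfile
from typing import Dict, List

BOARD_SIZE = 15


def save_model(path: str, weights: Dict[str, List[float]], arch: str) -> None:
    """Write weights plus the metadata needed to rebuild the model at load time."""
    payload = {"arch": arch, "board_size": BOARD_SIZE, "weights": weights}
    with open(path, "w", encoding="utf-8") as f:
        json.dump(payload, f)


def load_model(path: str, expected_arch: str) -> Dict[str, List[float]]:
    """Read weights back, refusing files saved for a different model or board."""
    with open(path, encoding="utf-8") as f:
        payload = json.load(f)
    if payload["arch"] != expected_arch or payload["board_size"] != BOARD_SIZE:
        raise ValueError("checkpoint does not match the model the server expects")
    return payload["weights"]


path = os.path.join(tempfile.mkdtemp(), "cnn.json")
save_model(path, {"conv1.weight": [0.1, -0.2]}, arch="cnn")
print(load_model(path, expected_arch="cnn"))  # {'conv1.weight': [0.1, -0.2]}
```

Storing the architecture name with the weights lets the server fail fast instead of silently loading a checkpoint into the wrong network.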

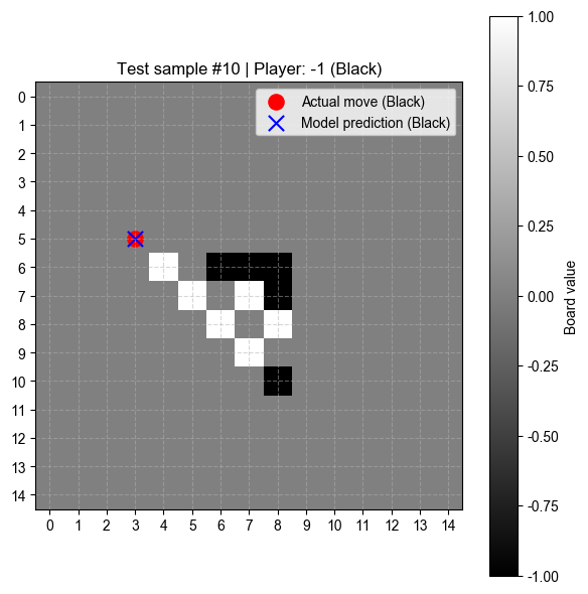

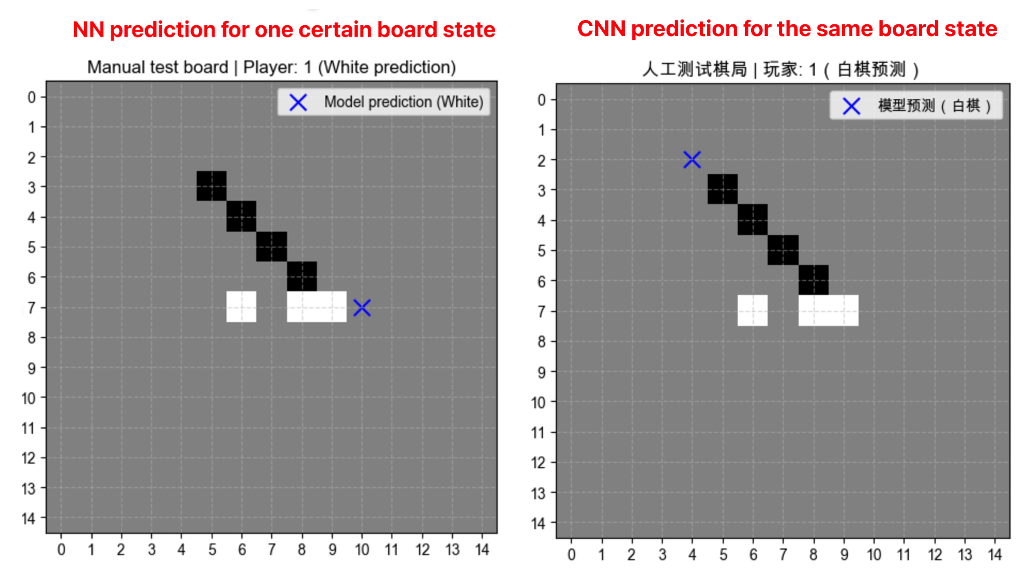

8. Manual Test

Finally, we still need some manual tests, because a high score does not necessarily mean the model plays well.

We set up board positions by hand and check whether the predicted next move makes sense.
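Manual checks are easier to repeat if each hand-built position is written down together with the moves considered acceptable. In this hypothetical sketch, `predict` stands for any function mapping (board, player) to a (row, col) move, such as a wrapper around the trained CNN. The example position gives black (1) an open four on row 7, so a sensible model must play one of the two ends.

```python
from typing import Callable, List, Set, Tuple

BOARD_SIZE = 15
Move = Tuple[int, int]
Board = List[List[int]]


def make_board(stones: dict) -> Board:
    """Build a 15x15 board from {(row, col): player}; 0 = empty (assumed convention)."""
    board = [[0] * BOARD_SIZE for _ in range(BOARD_SIZE)]
    for (r, c), player in stones.items():
        board[r][c] = player
    return board


def check_position(predict: Callable[[Board, int], Move], board: Board,
                   player: int, acceptable: Set[Move]) -> bool:
    """Run the model on a hand-built position and report whether its move is acceptable."""
    move = predict(board, player)
    ok = move in acceptable
    print(f"predicted {move}, expected one of {sorted(acceptable)}: {'PASS' if ok else 'FAIL'}")
    return ok


# Black (1) has an open four on row 7; either end completes five in a row.
open_four = make_board({(7, 5): 1, (7, 6): 1, (7, 7): 1, (7, 8): 1, (3, 3): -1})


def first_empty(board, player):
    """Hypothetical stand-in for the real model: plays the first empty cell."""
    for r in range(BOARD_SIZE):
        for c in range(BOARD_SIZE):
            if board[r][c] == 0:
                return (r, c)


check_position(first_empty, open_four, 1, {(7, 4), (7, 9)})  # FAIL: plays (0, 0)
```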

Optimization: Reinforcement Learning

We can train a reinforcement learning agent through self-play against a frozen opponent, using reward shaping and periodic evaluation to iteratively improve policy performance.

Alternate whether the agent plays first or second, sampling moves from its policy during training and choosing the highest-probability move during evaluation.
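The two move-selection modes and the side alternation can be sketched as follows, assuming the policy has already produced a probability for each of the 225 cells with illegal cells set to zero. Sampling keeps self-play exploring; greedy selection makes evaluation deterministic. The function names are hypothetical.

```python
import random
from typing import List, Optional, Tuple

BOARD_SIZE = 15


def agent_plays_first(game_index: int) -> bool:
    """Alternate sides: the agent moves first in even-numbered games."""
    return game_index % 2 == 0


def select_move(probs: List[float], greedy: bool,
                rng: Optional[random.Random] = None) -> Tuple[int, int]:
    """Pick a cell from a 225-way policy.

    greedy=True  -> argmax (evaluation)
    greedy=False -> sample proportionally to probs (self-play training)
    """
    if greedy:
        index = max(range(len(probs)), key=probs.__getitem__)
    else:
        rng = rng or random.Random()
        index = rng.choices(range(len(probs)), weights=probs, k=1)[0]
    return divmod(index, BOARD_SIZE)


probs = [0.0] * (BOARD_SIZE * BOARD_SIZE)
probs[112], probs[113] = 0.7, 0.3
print(select_move(probs, greedy=True))                          # (7, 7)
print(select_move(probs, greedy=False, rng=random.Random(0)))   # (7, 7) or (7, 8), depending on the draw
```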

To accelerate learning, apply reward shaping: grant a bonus for an immediate win and a penalty for a move that leaves the opponent a one-move win.
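A sketch of the shaping rule under stated assumptions: players are 1 and -1, a winning move earns a terminal reward of 1 plus a bonus, the bonus and penalty magnitudes are placeholders, and "exposing a one-move win threat" is taken to mean that after the agent's move the opponent has some empty cell that completes five in a row. None of these values come from the original project.

```python
from typing import List, Tuple

BOARD_SIZE = 15
WIN_BONUS = 0.5        # placeholder magnitude
THREAT_PENALTY = -0.5  # placeholder magnitude
DIRECTIONS = [(0, 1), (1, 0), (1, 1), (1, -1)]  # horizontal, vertical, two diagonals


def makes_five(board: List[List[int]], row: int, col: int, player: int) -> bool:
    """True if placing `player` at empty (row, col) gives five or more in a row."""
    for dr, dc in DIRECTIONS:
        count = 1  # the stone being placed
        for sign in (1, -1):
            r, c = row + sign * dr, col + sign * dc
            while 0 <= r < BOARD_SIZE and 0 <= c < BOARD_SIZE and board[r][c] == player:
                count += 1
                r, c = r + sign * dr, c + sign * dc
        if count >= 5:
            return True
    return False


def opponent_can_win_next(board, opponent: int) -> bool:
    """True if the opponent has any empty cell that completes five immediately."""
    return any(board[r][c] == 0 and makes_five(board, r, c, opponent)
               for r in range(BOARD_SIZE) for c in range(BOARD_SIZE))


def shaped_reward(board, move: Tuple[int, int], player: int) -> float:
    """Reward for `player` playing `move` on `board` (board is not modified)."""
    row, col = move
    if makes_five(board, row, col, player):
        return 1.0 + WIN_BONUS  # immediate victory: terminal reward plus bonus
    after = [r[:] for r in board]
    after[row][col] = player
    if opponent_can_win_next(after, -player):
        return THREAT_PENALTY  # the move leaves the opponent a one-move win
    return 0.0
```

The threat check scans all 225 cells after every move, which is fine for a sketch but could be limited to cells near existing stones if it becomes a bottleneck.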

Regularly evaluate the agent’s performance against both a random opponent and the frozen model.

When the agent consistently outperforms the frozen opponent, replace the opponent with the improved policy so the agent keeps facing a challenging opponent.
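The update rule can be made concrete with a small helper that consumes the results of evaluation rounds against the frozen model. The win-rate threshold (55%), the number of consecutive rounds required (2) and the use of `copy.deepcopy` to snapshot the policy are illustrative assumptions; the original project may define "consistently outperforms" differently.

```python
import copy
from dataclasses import dataclass
from typing import Any


@dataclass
class OpponentManager:
    """Keeps a frozen copy of the policy and refreshes it when the agent keeps beating it."""
    frozen: Any
    threshold: float = 0.55   # illustrative: win rate vs. frozen model that counts as "better"
    required_streak: int = 2  # illustrative: consecutive evaluations above the threshold
    streak: int = 0

    def report(self, agent: Any, wins: int, games: int) -> bool:
        """Record one evaluation round against the frozen model.

        Returns True if the frozen opponent was replaced by a copy of `agent`.
        """
        win_rate = wins / games
        self.streak = self.streak + 1 if win_rate >= self.threshold else 0
        if self.streak >= self.required_streak:
            self.frozen = copy.deepcopy(agent)  # snapshot so further training doesn't alter it
            self.streak = 0
            return True
        return False


manager = OpponentManager(frozen={"version": 0})
agent = {"version": 1}
print(manager.report(agent, wins=60, games=100))  # False: first round above threshold
print(manager.report(agent, wins=58, games=100))  # True: second in a row, opponent updated
print(manager.frozen)                              # {'version': 1}
```

Requiring a streak rather than a single good round keeps one lucky evaluation from promoting a policy that is not actually stronger.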

Repeat self-play and evaluation cycles, enabling the agent to learn and adapt beyond supervised data through iterative self-competition.