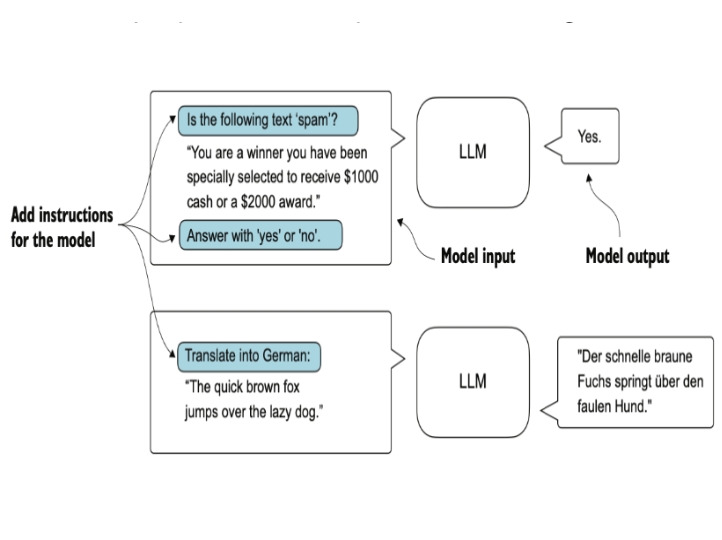

Fine-Tuning Large Language Models (LLMs) to Follow Instructions

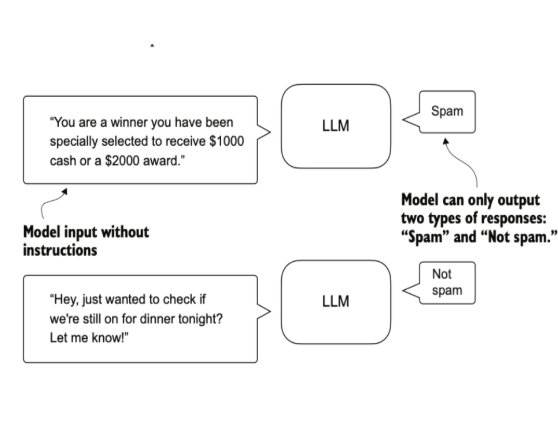

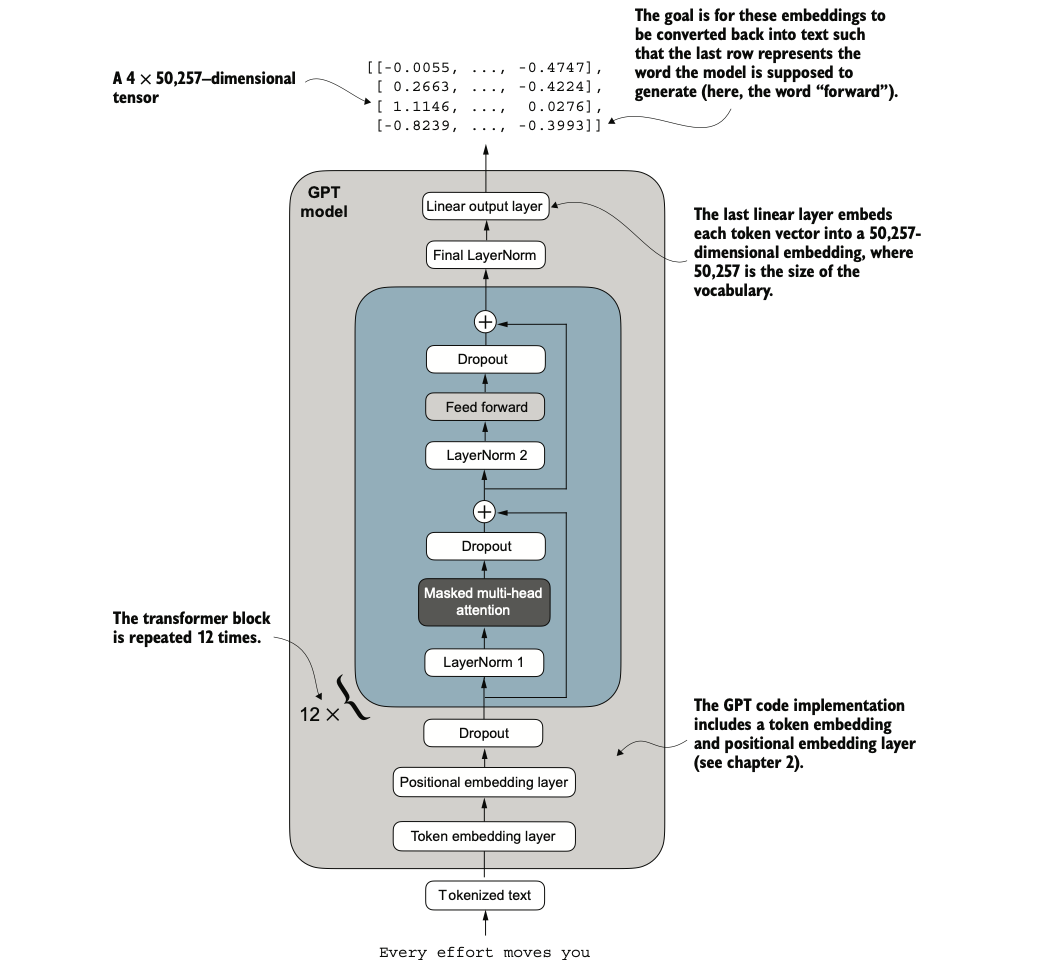

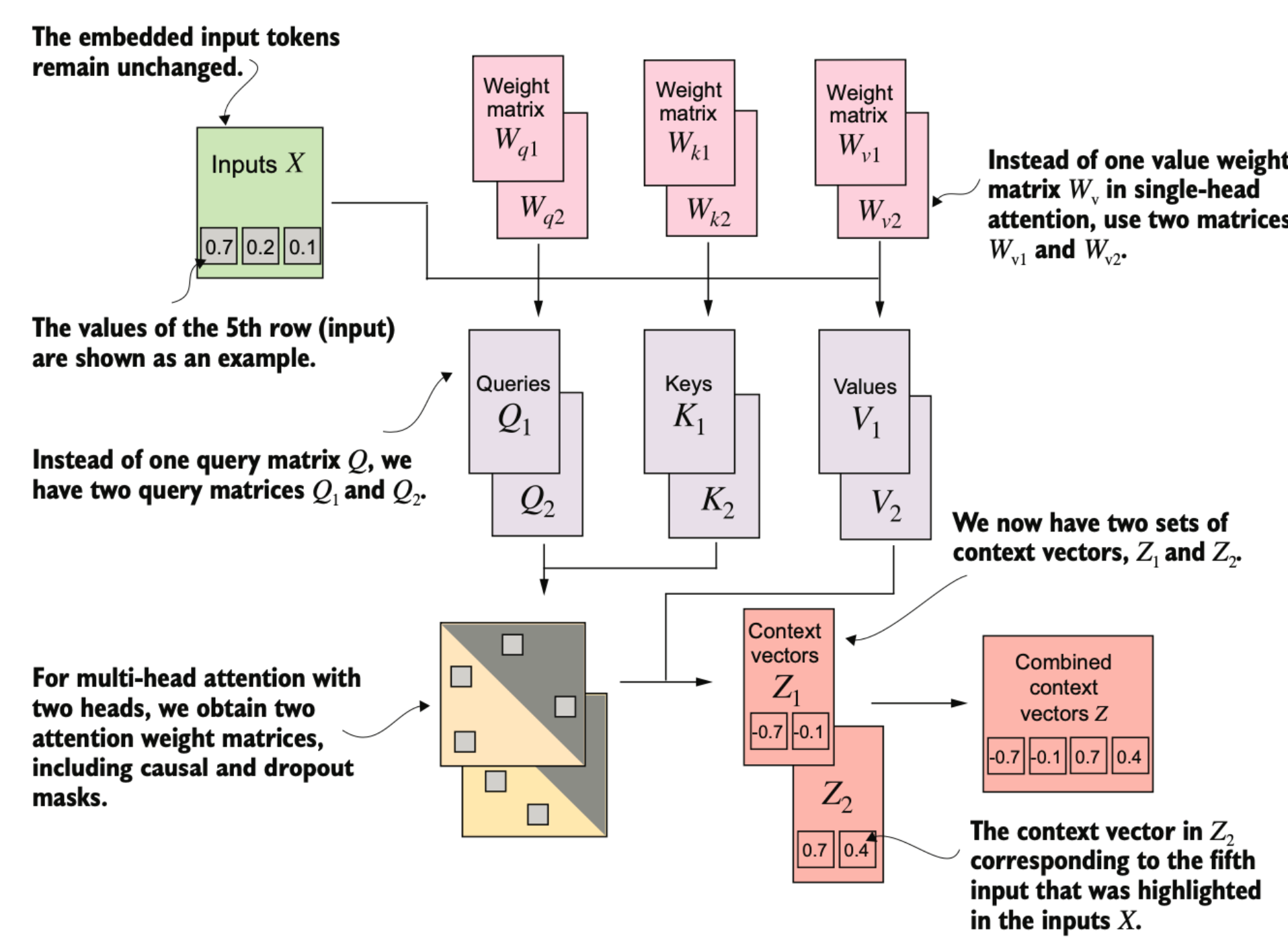

As one application of LLMs, a pre-trained model can be fine-tuned further so that it becomes more versatile and can carry out the instructions it is given.
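To make the idea concrete, here is a minimal sketch of how a single instruction example might be turned into one training string before fine-tuning. The field names (`instruction`, `input`, `output`), the function `format_instruction_example`, and the Alpaca-style prompt template are assumptions chosen for illustration; the article does not specify a dataset format or template.

```python
def format_instruction_example(entry: dict) -> str:
    """Build one training string from an instruction record.

    Assumption: an Alpaca-style template; other templates are equally valid.
    """
    prompt = (
        "Below is an instruction that describes a task. "
        "Write a response that appropriately completes the request."
        f"\n\n### Instruction:\n{entry['instruction']}"
    )
    # The optional input field carries extra context for the instruction.
    if entry.get("input"):
        prompt += f"\n\n### Input:\n{entry['input']}"
    # The desired response is appended as the text the model learns to produce.
    return prompt + f"\n\n### Response:\n{entry['output']}"


# Hypothetical record, made up for this sketch.
example = {
    "instruction": "Convert the sentence to passive voice.",
    "input": "The chef cooks the meal.",
    "output": "The meal is cooked by the chef.",
}
text = format_instruction_example(example)
print(text)
```

Fine-tuning on many such strings, each pairing an instruction with its desired response, is what is meant to teach the pre-trained model to respond to instructions rather than merely continue text.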